The caterpillar shot in the teaser was certainly one of the most time-consuming shots we have done at Pataz, especially considering it’s only an 87-frame shot featuring a glorified worm on a stick :)

No matter how good a piece of 3D software is, it will never be prepared for every possible situation a shot might ask for. As a technical artist I’m in charge of figuring out all those little solutions needed to “resolve” a shot. Since an open movie is all about sharing the experience of creating it, in this first post I’ll be writing about how the pupils were animated! (You might want to watch that clip again if you missed it.)

The wish:

Sarah wanted the eyes to look similar to an insect’s eyes. Notice how the light over the micro “prisms” creates a kind of black hole in front?

The idea was to exploit this characteristic and turn it into the pupils of the character. The common approach for animating pupils is to model them with geometry, and we actually tried this by creating a two-layer model of the eye: the first layer was a glassy transparent mesh, and a few millimeters inside was another mesh with a hole in it. This kind of worked, but it didn’t give us the exact textured look we were looking for. We ended up using a simple single-surface mesh with textures.

The problem:

The problem with textures is animating them. Blender has a million ways to animate vertices but zero native ways to animate a texture! Sure, we could animate an offset in the mapping parameters, but that’s not enough to deform and manipulate the shape of the pupils. The obvious answer here was animating the UVs, and if you’re thinking about the AnimAll addon then kudos to you, you’re very well informed ;) This addon exposes hidden powers of Blender and lets you directly animate things like meshes, curves and yes, UVs!

The catch:

Since the days of Big Buck Bunny we have had a way to reuse a character in multiple scenes by linking it from a single character blend file. After the link is made we make a local copy of the control armature(s) and animate on it; this local copy is called a proxy. This feature is pretty much essential in any production, and a rigger must ensure that everything animatable in the character is accessible from the proxy.

Sadly the proxy system is limited to object- and bone-level transforms and does not provide access to mesh data! Gah, so close!

The solution:

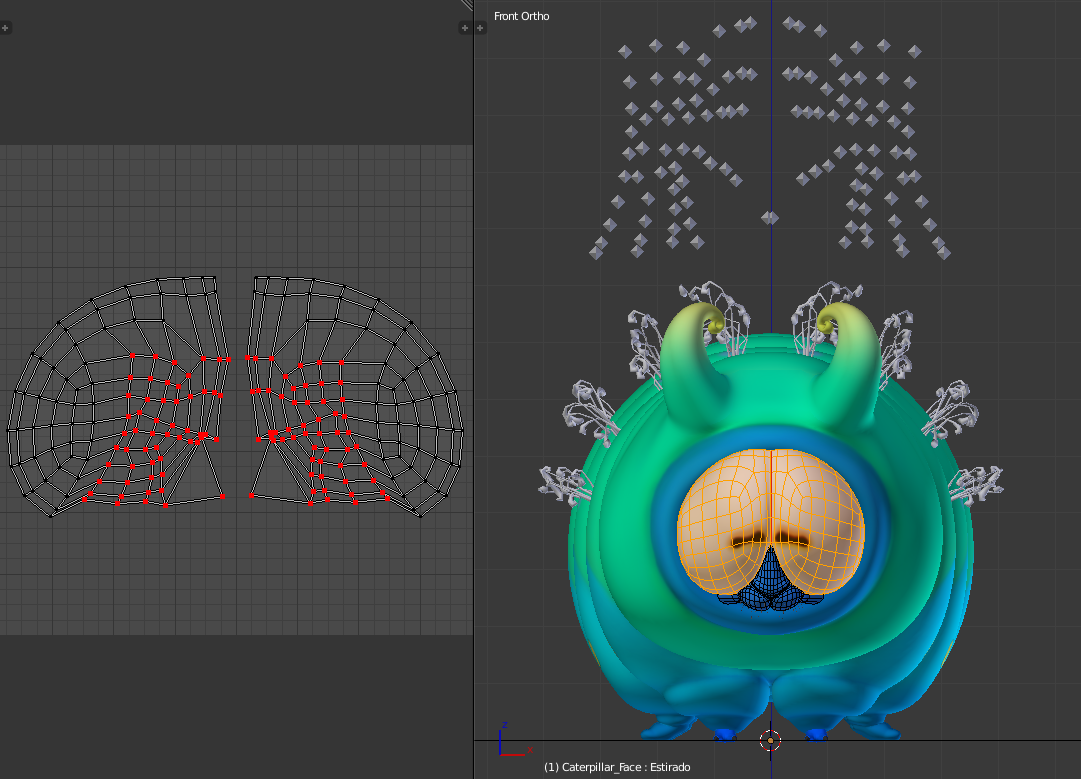

Luckily, since the Blender 2.5 refactor you can be almost sure of one thing: if something is animatable it is also drivable! This means that in theory we could assign drivers to each of the UV points and control them with bones, which are totally proxy-friendly. Of course this is easier said than done, since each vertex of a mesh would require 8 drivers in the UVs to work… wait WHAT?? Here’s the proof:

In a typical case a vertex is shared by 4 adjacent faces, which means the UVs will store 1 point for each of those faces. Multiply that by 2 (U+V) and you get 8 drivers! This is certainly not something you’d like to do manually, so at about midnight I started hacking a Python script to automate this ^_^’
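The arithmetic can be checked with a few lines of plain Python. This is only a sketch that runs outside Blender: the 3x3 grid of quads and the function name `drivers_per_vertex` are made up for illustration, and the production script works on Blender’s own mesh data instead.

```python
from collections import Counter

def drivers_per_vertex(faces, channels=2):
    """Count the UV drivers each vertex would need.

    Every face corner stores its own UV point, so a vertex needs one
    driver per channel (U and V) for every face that uses it.
    """
    corners = Counter(v for face in faces for v in face)
    return {v: n * channels for v, n in corners.items()}

# Hypothetical test mesh: a 3x3 grid of vertices forming 4 quads.
#   0 1 2
#   3 4 5
#   6 7 8
faces = [(0, 1, 4, 3), (1, 2, 5, 4), (3, 4, 7, 6), (4, 5, 8, 7)]

counts = drivers_per_vertex(faces)
print(counts[4])             # centre vertex, shared by 4 faces
print(counts[0])             # corner vertex, used by 1 face
print(sum(counts.values()))  # drivers needed for the whole grid
```

The centre vertex comes out at 8 drivers, matching the count above, while vertices on the border of the mesh need fewer because they belong to fewer faces.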

At about 3:00am it actually worked! For each of the pinned vertices in the UV, the script creates a bone and assigns drivers pointing to that bone. This solution turned out to be even nicer to animate than direct UV manipulation, since normally the effect on the texture when moving UV points is “reversed”. For example: if you would like to create an arc pointing upwards in the pupils, you would need to move the UVs in the opposite direction. By creating the drivers with inverted values (a bone offset of 1 becomes a UV offset of -1) we can make the texture deformation match the bone transformation perfectly!
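Here is a tiny Python sketch of why the inversion helps. It is not taken from the production script: the function names, the `factor` parameter and the sample numbers are assumptions for illustration, and it only models a uniform shift of all UV points rather than a real per-bone deformation.

```python
def driven_uv(rest_uv, bone_offset, factor=-1.0):
    """UV value a driver would produce for a given bone offset.

    factor=-1.0 is the inverted mapping; factor=1.0 would be a
    plain, non-inverted driver.
    """
    u, v = rest_uv
    dx, dy = bone_offset
    return (u + factor * dx, v + factor * dy)

def feature_position(feature_uv, bone_offset, factor=-1.0):
    """Where a texture feature ends up on the surface.

    The surface is described by its rest UV coordinates. A surface
    point with rest UV r now samples the texture at r + factor * offset,
    so the feature shows up where that expression equals feature_uv.
    """
    fu, fv = feature_uv
    dx, dy = bone_offset
    return (fu - factor * dx, fv - factor * dy)

pupil = (0.5, 0.5)    # hypothetical pupil centre in the texture
bone = (0.25, 0.0)    # bone moved a quarter of the UV range to the right

print(driven_uv(pupil, bone))               # the UV point moves left
print(feature_position(pupil, bone))        # the pupil follows the bone
print(feature_position(pupil, bone, 1.0))   # without inversion it runs away
```

With the inverted factor the UV point goes one way and the visible pupil goes the other, which is exactly the direction the bone was moved in.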

Here’s the full script in case you need it too. Beware: it’s a production script, so it doesn’t have a nice UI or anything, and you might need to change a couple of names in the first few lines :)

http://www.pasteall.org/50381/python

As a final note I’d like to mention that improving Blender’s proxy system is one of the big tasks targeted for Gooseberry development and one of the reasons we need to support this project! There is no better way to push Blender into the future than passing it through the fire of an open movie.

keep blending in,

Daniel Salazar – patazstudio.com

Gorgeous work guys, I’m in love with that piece. To me it is the best part of the trailer in every way: lighting, animation, shading, etc.

Kudos on the piece and the solution you guys came up with.

Cheers

That feeling when you try to write new code that does something totally new, you work for hours, and then it works!! That feeling :D (and it’s much greater if it was nearly impossible)

Thank you for the code :) I’m sure I might use it in the future.

Exactly! Jumps of joy at 3:00am :D

I got goosebumps.

Awesome. Such an informative post, thanks for sharing!

This is exactly the kind of stuff you learn the most from (how UVs work, what can or can’t be driven, etc).

And it’s great to hear you’re also pinpointing the issues that need to be resolved with the linking system and that the project will help improve them.

Cheers and keep up the good work!

Couldn’t you have just animated the pupil in a 2D program (such as After Effects or an open source equivalent) and used an image sequence as the texture for the pupil…? The described way sounds awfully inconvenient.

Hi Jan, well sure you “can”, and for a shot like this it could be fine, but imagine doing that for a full short film, and also taking into account all the changes, reviews, etc. of the animation process. Then it really becomes more of a pain to do it with a video texture. It’s very valuable to me that the facial animation is part of an “action”. That’s my opinion anyway. This could indeed have been solved in a few other ways :)

Okay, that’s definitely a point. It would be quite a pain to puzzle things together from different programs.

And no matter which way you choose, it’s the result that counts, and the shot you presented here is just gorgeous :)

Good solution, but it would have been much easier with a tool that supports animated vector textures.

That would be quite something! To use a real-time 2D curve as a texture… but I can’t think of a good way to implement this so far, will ask the coders :)

Yes, 2D curves and 2D shapes as textures … animated also, Blender 2D performance :-)

There was an old patch to implement SVG as textures but I’m afraid it missed

I think M@ya has already implemented this.

I found it:

Svgtex is a Blender plugin. It uses the content of *.svg files as textures. This component is free (available under the General Public License) and open source.

http://airplanes3d.net/downloads-svgtex_e.xml

There are so many secrets behind the UI, nice catch!

Doesn’t the “hole” in real insect eyes actually appear to follow you as you move around?

Nope, we were “inspired” by that optical effect in the insect’s eyes but we used it like it was a pupil :) So it doesn’t follow the camera.

I achieve this kind of effect (pupil dilation) by using a shape key plus edge slide to be sure to match the geometry, and then I use a driver. I’m not sure if this method would work in this case.

I have dreamed of getting some courses about this topic ever since I started developing an interest in it, and now I’ve been fortunate to be one of several readers of your weblog on this important matter. My dream of becoming an expert just like you is starting to become a reality.

I am happy to have the opportunity to thank you on my own for your generosity.

Thank you very much

Could you make a more in-depth video about how the script works?

It would be really interesting to see how you get access to the UV data and drive it with drivers.

Hi, Daniel! :D

I think that – again – you solved my problems! :)

Remember my use of the AnimAll addon to create view-based deformations on characters using lattices? I’ll adapt this script to make this solution production ready, creating the controllers for each lattice point automagically! :D

Thanks a lot! (I’ll show you when it’s done)

I ran into the same case for my project and I can’t figure out how to make the script work. Any in-depth explanation is appreciated :)