This morning I had a first kick-off meeting with Campbell, Sergey, Lukas and Antony – to evaluate possible development targets for the film. We really hope to be able to tackle a number of issues that have already been postponed for too long.

-

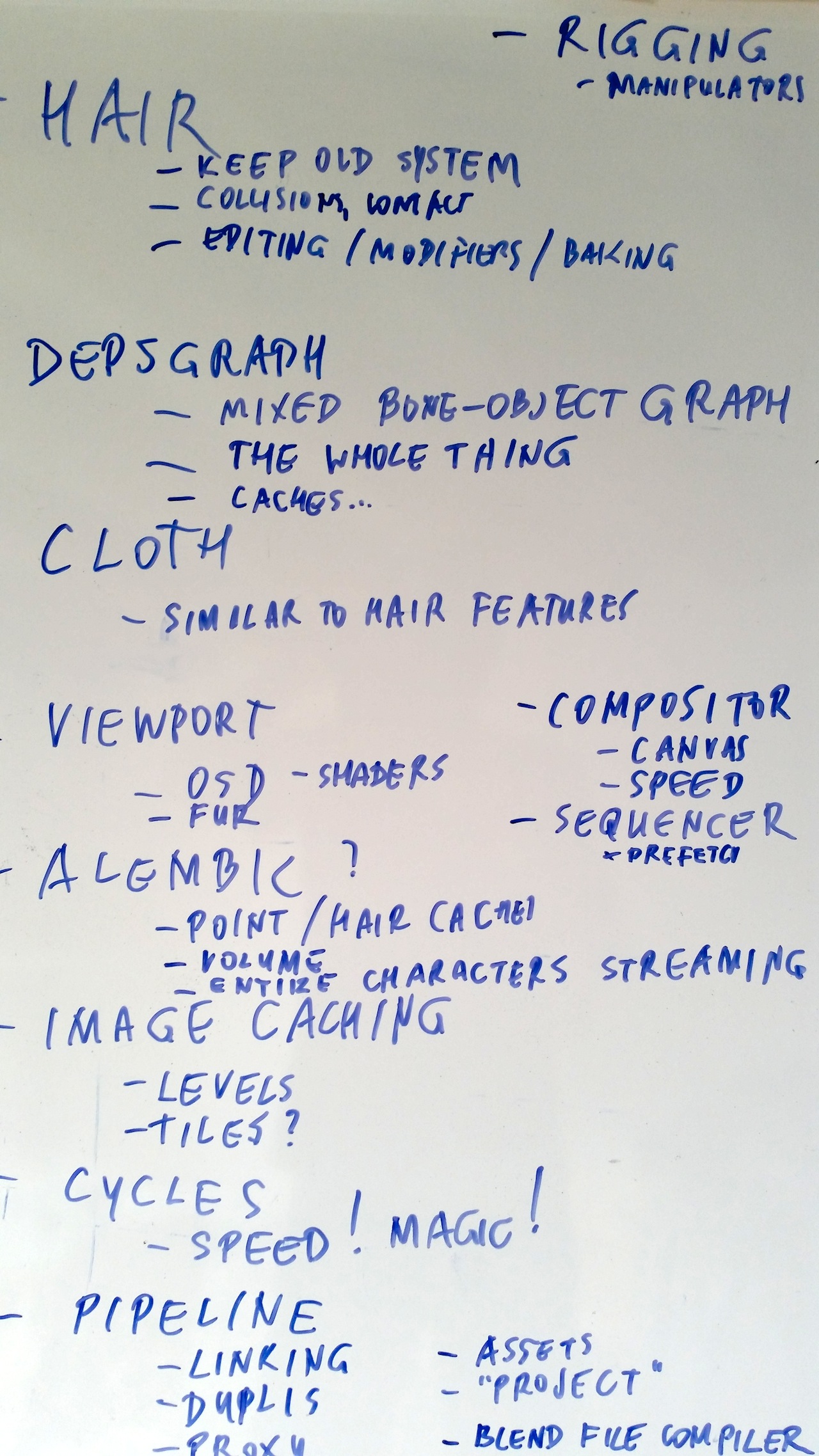

Hair simulation

Lukas summarized the progress with the hair code so far, the issues and the doubts. The main issue is that there’s complicated existing code that sort of works; the main doubt is that there are already designs for a new particle/hair node system. When is work on the existing code a waste of time, and how feasible is it to make a new system from scratch?

We decided that Lukas will keep working on the existing system, and work with the artists here to create a final-film-quality hair sim shot a.s.a.p.

-

Object Nodes for transform, constraints, drivers, modifiers.

Well. Let’s add particle and hair nodes to the mix too! But it’s too big a project to expect results within a few months. Lukas will work on this part time (half a day per week or so) to make a prototype – if he has time and energy left!

-

Hair sim editing

“The best sim is no sim” – this whole simulation feature should be nearly invisible and under perfect artistic control. So we need tools to manage stable contact collisions, set keyframes for hair guides, and bake and edit caches. Or perhaps even some kind of modifiers with hair vertex group weight control.

-

Dependency Graph

Sergey already worked on threaded object updates, and will look further into handling and solving dependencies between transform and modifier updates. Or even just solve the whole dependency issue entirely – including materials, nodes, point caches, and so on.

He’ll be building on the work Joshua Leung did for GSoC last year. Joshua has accepted a Development Grant to work with us in Nov/Dec as well. We want this solved before final animation on shots starts in January. Preferably sooner though, since riggers depend on this completely.
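To make the ordering problem concrete, here is a minimal sketch (not Blender’s actual depsgraph code) of how dependencies between transform, pose and modifier updates can be resolved with a topological sort, and how independent updates can be grouped into batches that threads could evaluate in parallel. The update names and the dependency table are hypothetical; the sketch relies only on Python’s standard `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter, CycleError

# Hypothetical dependency table: each update maps to the updates it depends on.
# Names are illustrative only; they do not correspond to Blender's internal API.
DEPS = {
    "Armature.transform": set(),
    "Armature.pose": {"Armature.transform"},
    "Body.transform": {"Armature.transform"},
    "Body.armature_modifier": {"Body.transform", "Armature.pose"},
    "Body.subsurf_modifier": {"Body.armature_modifier"},
    # The cloth collides with the deformed body, so it must wait for it.
    "Shirt.cloth_modifier": {"Body.subsurf_modifier"},
}


def evaluation_order(deps):
    """Return one valid serial order in which every update follows its dependencies."""
    return list(TopologicalSorter(deps).static_order())


def parallel_batches(deps):
    """Group updates into batches; updates inside one batch do not depend on
    each other, so a threaded evaluator could run them concurrently."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises CycleError if the graph contains a dependency cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


if __name__ == "__main__":
    for batch in parallel_batches(DEPS):
        print(batch)
    # A dependency cycle (e.g. two constraints targeting each other) cannot be ordered.
    try:
        evaluation_order({"Bone.A": {"Bone.B"}, "Bone.B": {"Bone.A"}})
    except CycleError as err:
        print("cycle detected:", err.args[1])
```

In this toy table the armature pose and the body transform end up in the same batch, since neither depends on the other; everything after them is strictly sequential.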

-

Cloth sim

One of the main film characters is a person in a suit. Simulations for regular clothing are the hardest ones to get right, but can be incredibly rewarding and time saving for the animators. Issues here are similar to hair sim – Lukas will check on upgrading this as well, including good contact/collision handling and properly integrating Bullet physics.

-

Rigging and animation tools

Antony started work on a “custom manipulator” system, which has now technically been named “window manager Widget”. The system will allow you to directly hook up an event handler to a 2D or 3D item in a view. Anything can then drive an operator!

Imagine controls in Blender for anything (for example a lamp spot size widget, a spin tool rotation widget, a scale widget for selection in the dopesheet editor). Widgets can even be parts of an existing mesh model, so riggers can use them to make invisible input ‘widgets’ for posing.

Sergey will check on the “Implicit Skinning” code – which should be available as GPL-compatible soon.

Note: Daniel Salazar and Juan Pablo Bouza will be our riggers, they are in daily contact with us on features and design topics. (And check the bf-animsys list please!)

-

Viewport upgrade (OpenGL 3, 4)

A good quality preview of characters and shot layouts is very helpful for a film project – a real time saver even, especially with real-time Subdivision Surfaces and real-time fur drawing. A side topic to look at is improving shaders (and shader editing) in the viewport – for modeling as well as preview rendering, and of course for the Game Engine.

Antony is already working on this, together with Jason Wilkins (GSoC Viewport project) and Alexander Kuznetsov.

-

Alembic support

We should really work on unifying the use of animation caches in Blender – including support for entire characters, with particles, hair, fluid and smoke. Having such caches stream in real time in Blender would be much faster, and would prevent issues with instancing, duplication and linking as well.

Campbell will work on this further.

-

Cycles

Well – basically we only have 1 real problem with Cycles rendering. Speed!

Sergey has a couple of ideas he will start working on first – especially for more efficient sampling schemes. We also discussed a couple of ways to optimize renders by making better use of coherence (or simply by baking things automatically).

We have an offer from Nvidia to use a massive cluster (16 x 8 of their best graphics cards) to render the entire film. We will test and evaluate this as well.

-

Image Cache

We already had this feature working in the 2.5 render branch (for Sintel). It means that Blender (or our asset system) should automatically manage the resolution levels of the image files we need, especially to handle the larger textures – which can easily reach 10k x 10k pixels. In many cases (like viewport work) you don’t need to load all the large files anyway. Campbell will look into this.
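The payoff of managing resolution levels is easy to quantify. Below is a minimal sketch, assuming uncompressed 8-bit RGBA pixels and a mipmap-style pyramid in which each level halves the previous one; the function names are hypothetical and this is not Blender’s image cache code. Under those assumptions a 10k x 10k texture costs 400 MB at full resolution, while a viewport that only draws it about 1000 pixels wide can use the 1250 x 1250 level at 6.25 MB, 64 times less.

```python
# Hypothetical helpers illustrating resolution levels for a large texture.
# Assumes uncompressed 8-bit RGBA and integer halving per level.

BYTES_PER_PIXEL = 4  # 8-bit RGBA; an assumption, real files vary


def resolution_levels(width, height):
    """Full-resolution size first, then each level halved until 1x1."""
    levels = [(width, height)]
    while width > 1 or height > 1:
        width = max(1, width // 2)
        height = max(1, height // 2)
        levels.append((width, height))
    return levels


def pick_level(width, height, on_screen_px):
    """Return (index, size) of the smallest level whose longest side still
    covers the number of pixels the image occupies on screen."""
    levels = resolution_levels(width, height)
    best = 0
    for index, (w, h) in enumerate(levels):
        if max(w, h) < on_screen_px:
            break
        best = index
    return best, levels[best]


def level_bytes(size):
    """Memory needed to hold one level, under the RGBA8 assumption."""
    w, h = size
    return w * h * BYTES_PER_PIXEL


if __name__ == "__main__":
    full = (10_000, 10_000)
    index, size = pick_level(*full, on_screen_px=1000)
    print(index, size)  # 3 (1250, 1250)
    print(level_bytes(full) / 1e6, "MB at full resolution")  # 400.0
    print(level_bytes(size) / 1e6, "MB at the chosen level")  # 6.25
```

A real cache would also handle compressed formats, tiling and eviction; the sketch only shows why loading a lower level is enough for on-screen work.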

-

Pipeline

Blender’s dynamic .blend linking feature needs to be brought under much better control – with a UI to manage it, update things, split or merge, pack or unpack, etc. We need ways to define “assets” for fast re-use, for versioning and for ‘levels’ or resolutions (low-res characters, high-res final characters). Linked data should also work with partial local overrides (called “Proxy” now in Blender) – so you can locally pose characters, or change just one parameter of a shader system.

Then there’s a need to define a “project” in Blender – for example, to link Blender files together relative to a common root path.

Last but not least – we’ll look into coding a “.blend file compiler”. This is code that can split .blend files up into assets and reassemble them again – for example based on an artist’s job description in a project. When the job is submitted back, the compiler simply splits it up again and only stores the relevant piece. Probably – hopefully – this compiler can bypass a lot of the problems we have with linking and proxies now.

Campbell will start working on the pipeline with Francesco, next week.

-

Compositor

The compositor still lacks a good way to define and use coordinate spaces (placement of node images inside a canvas). Speed is always an issue too! Most likely Sergey will be working on this.

-

Sequencer

Antony has already been fixing quite a few issues here for Mathieu (who did the animatic storyboard edit). Aside from general usability issues, he’ll be working on bringing back threaded pre-fetch.

-

Modeling and sculpting

Both Antony and Campbell love keeping the tools here in perfect working order – right after this meeting they shared progress on sculpting holes in meshes. That’s much needed by Pablo now, and he’ll get it in a few days.

So – this was just a summary of a 2-hour discussion. Are we really going to do all of this, or even more? Who knows :) The Mango development target list had quite a few of the above topics as well. Time will tell! You’re welcome to help though. Our development project is open (go to blender.org, “get involved”), or support us directly by joining Blender Cloud.

Special thanks to the members of the Development Fund and the 1000s of people who already supported us during the Gooseberry campaign (and who are massively renewing their subscriptions now) – thanks to you we can afford to have a lot of developer and artist power to tackle it all!

-Ton-

Just wanted to voice my support. All the things listed are excellent development targets, and you have some good developers there, so I’m sure there will be a nice jump in Blender’s capabilities in the coming months :).

Because of this post I will finally be joining the Cloud; it really made me enthusiastic about Blender’s future.

As far as the compositor goes, one might consider just using the OSS project Natron, which is relatively new but already seems far superior in many regards (especially speed). As much as one would wish for Blender’s compositor to finally get there, after so many years one might just face the facts, resort to a really good solution like Natron and support this (apparently well-funded) project instead.

Both Natron and Blender’s compositor have good reasons to live happily next to each other. They are different projects with a different focus. Our focus is the CG and animation render pipeline – which benefits tremendously from having a compositor directly hooked up.

Natron could try first to win the hearts of filmers and vfx artists. For them Blender is usually a bit too complex or inaccessible.

In 2014 compositing most certainly entails 3D. Natron does not have this, but Blender has it to an extent that no other software, not even the most expensive commercial software, has. What the Blender Foundation is missing in compositing is how big a user base VFX is. If only Blender would try to be the great VFX app instead of the great animation app!

I think if Natron and Blender collaborate with one another, the result could be a relationship like the one between Cinema 4D and After Effects. This may attract many people to what would look like a network of open-source and free software.

Now, don’t get me wrong: I think it is good that Blender is able to do a wide range of tasks. However, at the end of the day Blender focuses on the three-dimensional aspect of CGI, while Natron focuses on the two-dimensional aspect of CGI, i.e. compositing.

So if one takes three major projects: Blender, Natron, and some professional yet free and open-source video editor (Pitivi, Kdenlive, OpenShot, Shotcut), and combines their power, one will essentially create an inspiring, motivating and captivating network of open-source software that has, in my opinion, great potential to surpass corporate software.

Hi Nom, great that you’ve found a combo that works for you! But people here prefer to work with the Blender compositor and editor (the latter being named by opensource.com the best open-source editing software available!) — check out Mathieu’s two editing tutorials for more info: Part 1 and Part 2.

No word on OpenSubdiv?

OpenSubdiv will happen, I just called it “real-time Subdivision Surfaces”.

I like this list !

Especially the Rigging, Cycles, Compositing and Pipeline ones.

Good job and good luck to devs :)

Wow ! What an impressive feature list ! If you really manage to implement everything in there it’ll be monumental !

+1 for Cycles, compositor and sequencer love :)

Good luck to all the team!

I would welcome the ability to restrict visibility/selection/render for linked objects in the Outliner. I think it would make working with them much easier.

Very exciting... some amazing targets on that list! Very much looking forward to Alembic support and object nodes.

I am amazed that RAM caching for the compositor is still not a target. Please consider adding this to the list. Natron may give you some inspiration for this feature :)

Thank you Nvidia for the offer of the cluster use. :)

About “Hair sim editing” – maybe you can get some ideas from watching this video: https://www.youtube.com/results?search_query=lightwave+chronosculpt

“Sergey will check on the “Implicit Skinning” code – which should be available as GPL-compatible soon.”

A lot of the things on the list we already knew about and were excited for, but this one is so nice.

Nice targets indeed.

What about shape keys as a modifier as well?

What is that? Is there a proposal or design doc?

Hi Ton,

No, just a suggestion of the moment; I mean, we have particles, cloth and softbody as modifiers, so wouldn’t the same thing be useful for shape keys?

I remember a patch for a modifier that works like the Morpher in Max, or shape keys in Blender; it moves the vertices of the mesh using a deformed copy of the mesh...

Or was I drunk... no, no, I saw it.

You are right, I remember it as well!

And here it is:

http://blenderartists.org/forum/showthread.php?337131-Updated-Morph-Target-Modifier-by-kkar&highlight=morph+modifier

I wish to protest about the “TEXT” tool.

This tool has not been updated since the release of Blender 2.46,

which is the version I started with in Blender.

Why?

Those of us who need to make TV vignettes (text only) have a hard time…

It’s a shame to have to resort to another 3D program…

I use Cinema 4D

It’s quite unclear what you mean by the ‘TEXT’ tool. Do you mean the 3D object type, which allows editing text labels and so on?

In general, yes, videographics design in Blender is quite far behind what Cinema 4D offers – they’ve sort of specialized in it. I don’t really see a quick solution for this – we first need people on board who fully understand what tools are needed for it, and how to design and build them.

As a motion graphics artist (mainly c4d) I am very impressed with what is being developed in the MotionTool addon for Blender:

http://cgcookiemarkets.com/blender/all-products/motiontool/?ref=27

In some ways this is more advanced than the cloner/effector method used in C4D. Hopefully Lukas can use this addon as some inspiration for the object node tools, as it would be great to have this functionality in trunk.

I agree, it looks good, but it still has a long way to go: it’s only in beta and performance could be better. It starts to lag with somewhat more complex objects.

I haven’t tried it yet, as I was waiting for it to come out of beta. Since it is just Python nodes, I would imagine there is a lot of slowdown on complex projects, which is why these kinds of tools/workflows need to be written in C. Likewise, Sverchok looks cool, but we need these tools in trunk.

Hopefully the object nodes work gets a higher priority later on in the Gooseberry development, as Lukas can’t do much with only a half day a week. With all the bad press Autodesk is getting with their upcoming subs scheme and the recent death of Softimage hopefully more developers will flock to Blender to help develop the nodal side!

All great stuff – I saw a talk on implicit skinning earlier this year and it looked great, and a good compromise for getting proper skin motion.

However, I am insanely glad to see that pipeline proposal and that it’s high priority – doing the pipeline with college students has been driving me crazy every time we run 3Dami (3dami.org), as it’s so fragile and I spend much of my week fixing issues. Please imagine a room of puppy dogs all looking cute and urging you to have that in Blender master for summer next year ;-)

btw – is there any plan for compositor-video editor integration? What I really want is to be able to use node groups as effects and transitions (For transitions you could have a node group with two images and a parameter that is automatically animated from 0 to 1 as input, one image as output. Yeah, it needs a bit more design than that, but I am sure you get the idea.).

Sequencer is meant to be real time for shots.

Compositor is meant to be real time for frames.

Both approaches mean quite a different design and software architecture for things. Which is good, that means that for sequencer you can focus on speed, and for compositor on quality.

Having a compo node tree in the sequencer could work, but could also create big bottlenecks (and possibly grind the sequencer down to become very slow). I’d rather see other solutions then.

I can see the logic of that, though I can’t help but observe that the sequencer does a good job of using an OpenGL preview if you drop in a scene, flipping to a render when you tell it to generate actual output – a similar approach could be used, even if it’s only to show the effect via proxies or even only in the final render. You’re right that it needs some thought to work out a good compromise between flexibility and performance, though.

Regardless, it’s good to see the video editor getting some work, and it would be great to get even more power into it – right now (at least for me) it’s often *almost* enough, and you’re forced to do something much more involved to achieve a desired goal, rather than something only a little more involved.

Everything on this list sounds sweet. Especially the viewport upgrade, the Cycles stuff and the pipeline-related things.

But mostly I’m curious about object nodes. Well… I think this could have great potential for procedural modeling, but I’m afraid there is a strong possibility that it could be wasted somehow. Nodes based on the current system can still be nice, but IMHO you should create a comprehensive design doc about it and discuss it with the community: to be exact, all the tech artists, riggers and R&D people.

Blender modifiers are nice but have one flaw compared to mesh modeling operators: the context of the operation. A modifier can only process the whole mesh, or a selected vertex group taken from the mesh data.

Imagine a situation where mesh data has not only vertex groups but also face groups and edge groups. Next, add contexts created dynamically by previous modifiers/modeling operators. From one extrude operation we could create various contexts, like the previously selected faces, the newly created boundary faces, all modified faces, all edges parallel to the extrude direction, and so on.

Maybe this is a bigger target. Maybe even something for Blender 3.0. I hope Blender will eventually get powerful procedural modeling nodes. :)

Nice Targets

Thank you!

What a list! It’s too long, I think. Along the way I learned to focus on a couple of things and make those the best tools there are, rather than having a list of features programmed with loose ends.

Overall, happy to read about all the progress. Well done, devs!

Great news about the “custom manipulator” system; this would simplify the handling of rigs. This is a very interesting project that pursues the same objective: https://www.youtube.com/watch?v=OI81pu4KzgU

Long live free software!!!

>Well – basically we only have 1 real problem with Cycles rendering. Speed!

I totally agree. If Cycles could render at even 70% (or better, of course) of the speed of Corona, https://corona-renderer.com/ (I use it as an example because the two are technologically similar and both can run on the CPU), I have no doubt a great number of people would become interested at once, and connectors to 3ds Max, Maya, etc. would be written immediately. There are many good renderers, but V-Ray/Corona still win on speed/quality/capability. Speed is everything (or a lot).

Nice to see the depsgraph finally being taken care of!

Are armatures and bones part of the object node project?

The object node project looks awesome too but I hope the Dependency graph will be prioritized first as this is really a big issue for rigging.

What is the expected timeframe for this list?

It is pretty interesting, and I think a lot of people may be thinking of embracing Blender based on the recent commercial news.

Cheers!

Pipeline improvements are important. Good, keep moving forward!

Also, Bullet’s position-based (IIRC) cloth could be useful.

BTW, you might have considered these already, but I suggest:

– bidir/VCM for faster Cycles (time-memory tradeoff)

– Marschner hair model for colored hair

Hey, love the news on the hair particles. The lack of collision is really frustrating and makes it almost impossible to animate a character with long hair. Hope this will come soon.

Thank you for your great work on the simulations. It sounds very, very promising. Keep up your excellent work, please. Kind regards, Markus

We are very glad to see such goals, especially Alembic. Will cloth simulation tearing be a goal?